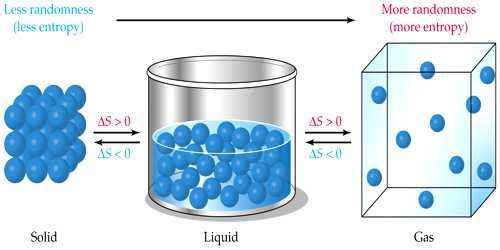

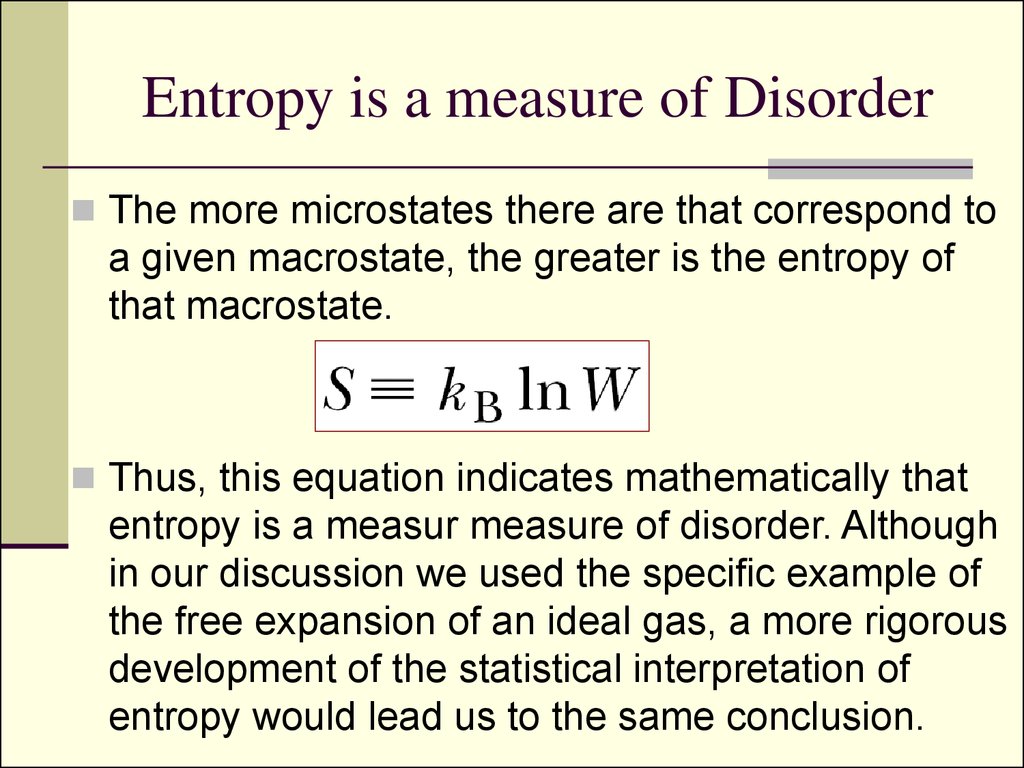

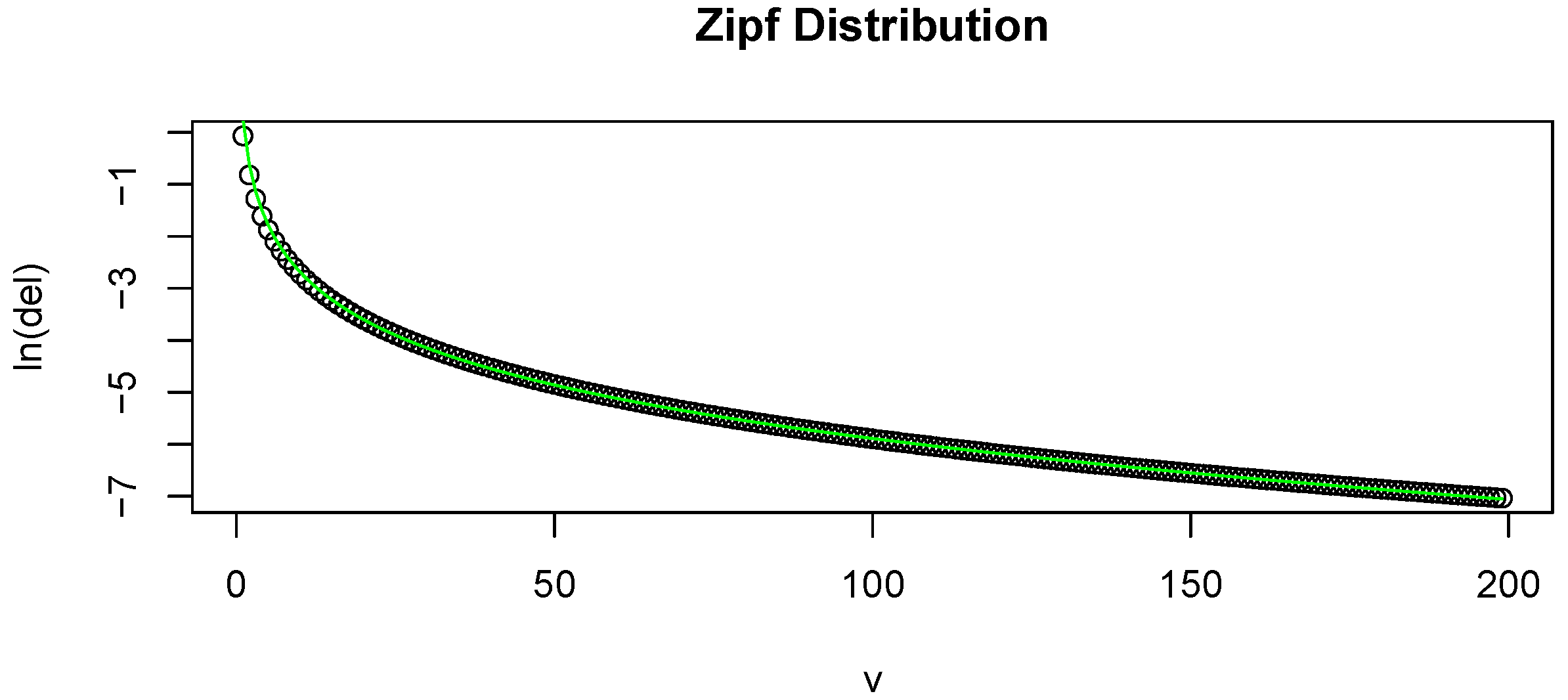

Entropy is a quantitative measure of randomness. Like the concept of noise, entropy is used to help model and represent the degree of uncertainty of a random variable, such as the prices of … The idea also reaches well beyond physics: a distance-based entropy measure has, for example, been defined for interval-valued intuitionistic fuzzy sets and applied to multicriteria decision making.

Jaynes, in his article "The Minimum Entropy Production Principle", points out the confusion that results from using the word "entropy" for quantities that actually differ mathematically or have different physical content: "By far the most abused word in science is 'entropy'. Confusion over the different meanings of this word, already serious 35 years ago, reached disaster proportions with the 1948 advent of Shannon's information theory, which not only appropriated the same word for a new set of meanings but, even worse, proved to be highly relevant to statistical mechanics." Jaynes himself distinguishes at least six different types of entropy.

The von Neumann entropy, S(ρ) = −Tr(ρ ln ρ), takes the value zero for a pure state, which is why, evaluated on the reduced density matrix of a subsystem, it can be used as a measure of entanglement. A commenter brought up an article in which both concepts are used: a complex entangled system is thermalized, and the entanglement entropy becomes thermodynamic entropy (as the entanglement vanishes). No assumptions about the size of the system or the particular form of the density matrix are made.
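
As a rough illustration of the two notions mentioned above, here is a minimal sketch (Python, standard library only) that computes the Shannon entropy of a discrete distribution and, for the simplest possible case, the entanglement entropy of a two-qubit pure state as the von Neumann entropy of one qubit's reduced density matrix. That von Neumann entropy equals the Shannon entropy of the matrix's eigenvalues. The function names are hypothetical, the restriction to two qubits (given as a normalized 2x2 coefficient matrix) is an assumption made so the eigenvalues have a closed form, and all entropies are reported in bits.

```python
import math


def shannon_entropy(probs, base=2.0):
    """Shannon entropy H(p) = -sum_i p_i * log(p_i) of a discrete distribution.

    Non-positive entries are skipped, so p_i == 0 contributes 0 (the limit of
    p log p as p -> 0). base=2.0 gives bits; base=math.e gives nats.
    """
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    h = -sum(p * math.log(p) for p in probs if p > 0) / math.log(base)
    # Clamp -0.0 and tiny negative rounding noise to 0.0.
    return max(0.0, h)


def reduced_density_matrix(c):
    """Reduced state of qubit A, rho_A = C C^dagger, for the two-qubit pure state
    sum_ij c[i][j] |i>_A |j>_B (partial trace over qubit B).

    Assumption (for illustration only): the state is a normalized 2x2
    coefficient matrix c; this is a hypothetical helper, not a library API.
    """
    return [
        [sum(complex(c[i][j]) * complex(c[k][j]).conjugate() for j in range(2))
         for k in range(2)]
        for i in range(2)
    ]


def hermitian_2x2_eigenvalues(m):
    """Closed-form eigenvalues of a 2x2 Hermitian matrix [[a, b], [conj(b), d]]."""
    a, d = m[0][0].real, m[1][1].real
    b = m[0][1]
    mean = (a + d) / 2
    radius = math.sqrt(((a - d) / 2) ** 2 + abs(b) ** 2)
    return [mean + radius, mean - radius]


def entanglement_entropy(c):
    """Von Neumann entropy -Tr(rho_A log2 rho_A) of qubit A, in bits,
    computed as the Shannon entropy of rho_A's eigenvalues."""
    return shannon_entropy(hermitian_2x2_eigenvalues(reduced_density_matrix(c)))


if __name__ == "__main__":
    s = 1 / math.sqrt(2)
    print(shannon_entropy([0.5, 0.5]))             # fair coin: 1 bit
    print(shannon_entropy([0.9, 0.1]))             # biased coin: less than 1 bit
    print(entanglement_entropy([[1, 0], [0, 0]]))  # product state |00>: 0 bits
    print(entanglement_entropy([[s, 0], [0, s]]))  # Bell state: about 1 bit
```

For the product state the reduced state of qubit A is itself pure, so its entropy is zero; for the Bell state (|00⟩ + |11⟩)/√2 the reduced state is maximally mixed and the entropy is one bit. A realistic many-body calculation would use a linear-algebra library and a general eigensolver instead of the 2x2 closed form.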
